Microsoft has decided that it will no longer let companies use facial recognition tools that infer a person’s emotional state, gender, or age. The company is also restricting access to other technologies based on artificial intelligence, including a speech synthesizer that can create recordings resembling the voice of a real person.

Microsoft’s decisions stem from a review of its ethical rules for artificial intelligence (AI), known as the Responsible Artificial Intelligence Standard. According to reports, some of the features available so far will be modified, while others will be withdrawn from sale entirely.

Access to Azure Face limited

Among other things, access to the Azure Face facial recognition tool will be limited. Until now, many companies, such as Uber, have used it to verify users’ identities. Under the new rules, any company wishing to use the facial recognition feature will have to apply for access. The application must demonstrate that the company meets Microsoft’s ethical standards for using artificial intelligence and that the service will be used for the benefit of the end user and the community.

At the same time, the ability to use some of the most controversial features of Azure Face will be completely removed. Microsoft will withdraw, among other things, technology that allows the assessment of emotional states and identification of characteristics such as gender and age.

“We collaborated with internal and external researchers to understand the limitations and potential benefits of this technology, and to reach trade-offs. When it comes to recognizing emotions in particular, these efforts have led us to important questions of privacy, disagreement over the definition of ‘emotion’ and the inability to generalize the link between facial expressions and emotional state,” said Sarah Bird, a Microsoft product manager, as quoted by the British newspaper The Guardian.

Deepfakes

Microsoft will also restrict access to Custom Neural Voice, its text-to-speech technology. The synthesizer can create a synthetic voice that sounds almost identical to the voice of a chosen real person. The restriction stems from concerns that the technology could be used to impersonate others and mislead the public. “With the advancement of technology that makes artificial speech indistinguishable from human voices, there is a risk of damaging deepfakes,” explains Microsoft’s Qinying Liao, as quoted by The Guardian.

However, the company is not abandoning emotion recognition completely. It will continue to be used internally in accessibility tools such as Seeing AI, which describes the world in words for users with visual impairments.

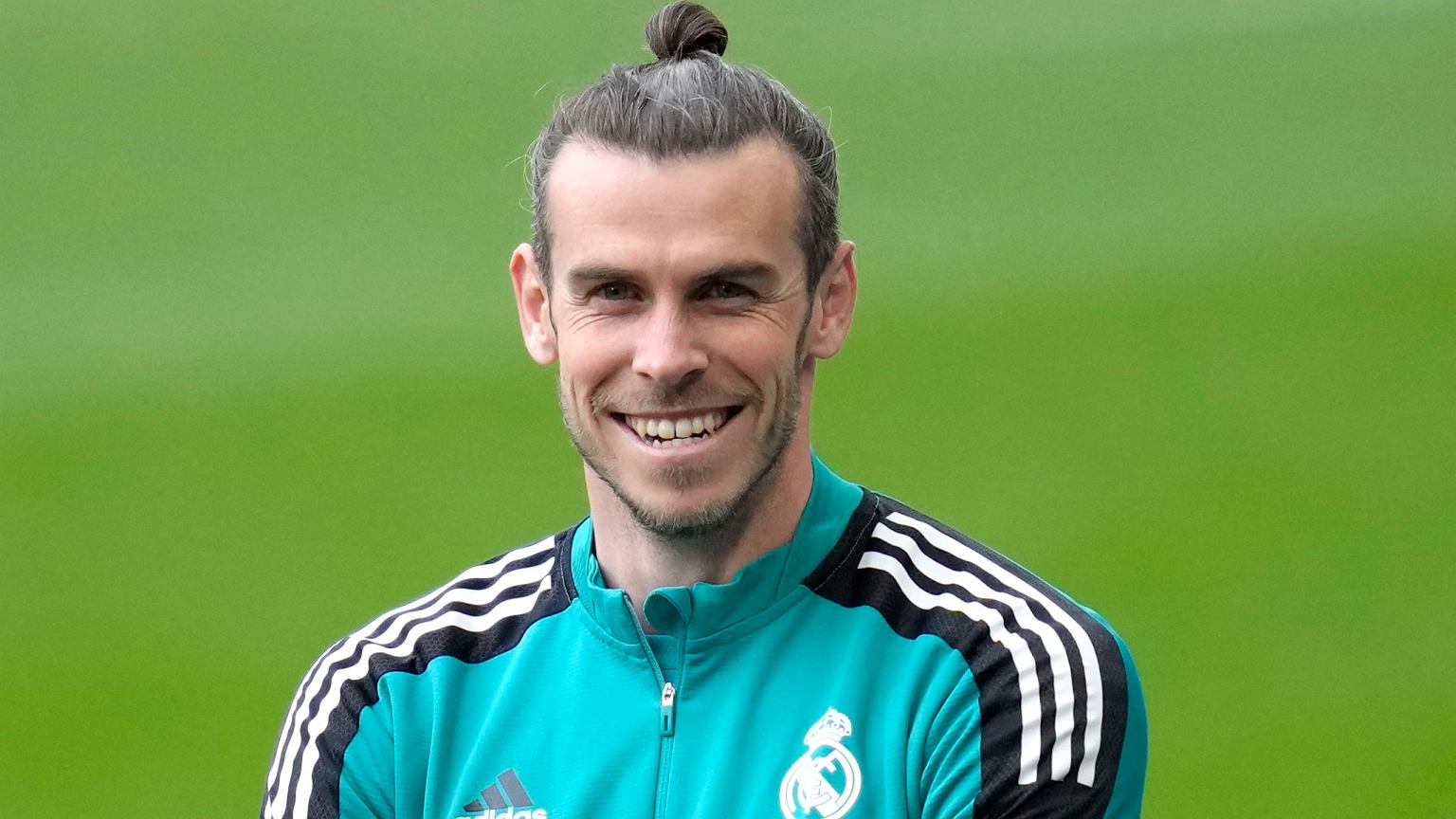

Main image source: VDB Images / Shutterstock